SARA: Selective and Adaptive Retrieval-augmented Generation with Context Compression

A hybrid RAG framework that combines natural-language snippets with semantic compression vectors — preserving fine-grained facts while extending effective context.

Retrieval-augmented generation (RAG) extends large language models (LLMs) with external knowledge, but it must balance limited effective context, redundant retrieved evidence, and the loss of fine-grained facts under aggressive compression. Pure compression-based approaches reduce input size but often discard fine-grained details essential for factual accuracy. We propose SARA, a hybrid RAG framework that targets answer quality under fixed token budgets by combining natural-language snippets with semantic compression vectors. SARA retains a small set of passages in text form to preserve entities and numerical values, compresses the remaining evidence into interpretable vectors for broader coverage, and uses those vectors for iterative evidence reranking. Across 9 datasets and 5 open-source LLMs spanning 3 model families (Mistral, Llama, and Gemma), SARA consistently improves answer relevance (+17.71), answer correctness (+13.72), and semantic similarity (+15.53), demonstrating the importance of integrating textual and compressed representations for robust, context-efficient RAG.

The gains above are averaged over in-domain datasets with Mistral-7B as the QA backbone.

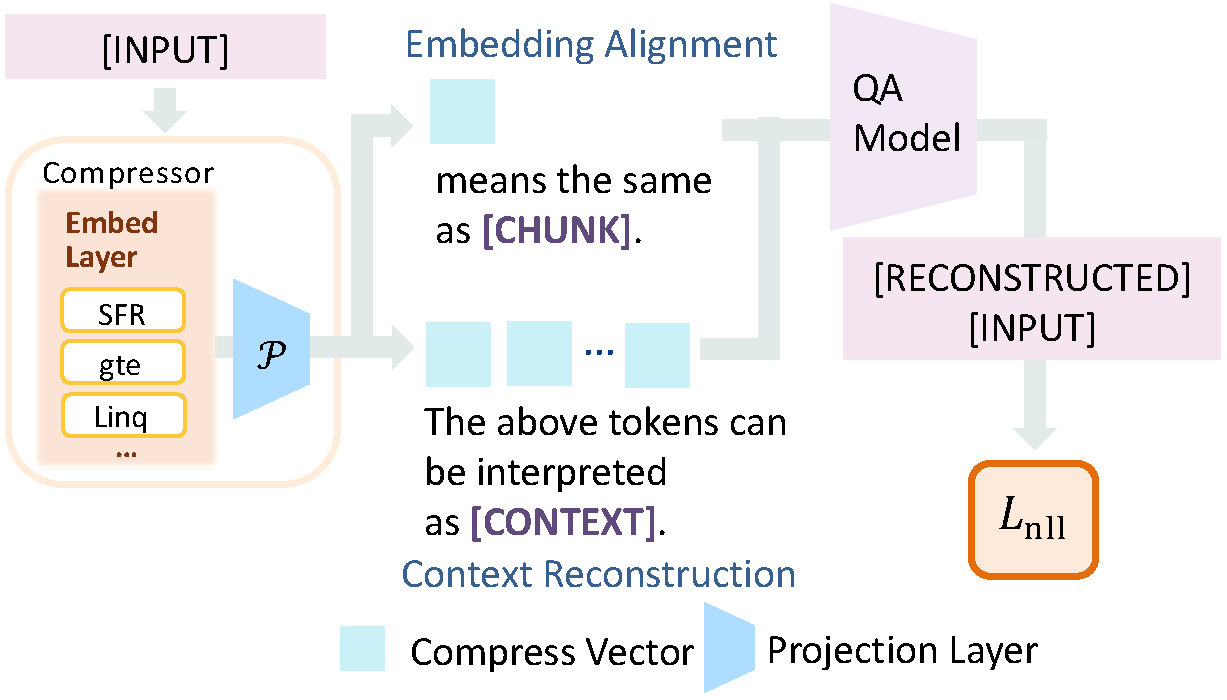

SARA learns a compressor that encodes evidence into single-token semantic vectors, then adapts the LLM to reason over a mixture of natural-language snippets and compressed contexts.

Balances local precision via natural-language spans with global abstraction through compression vectors — fine-grained reasoning under strict token budgets.
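The split between text and compressed evidence can be sketched as follows. This is a minimal illustration, not SARA's implementation: the `Passage` type, its `score` field, and the `split_hybrid_context` helper are hypothetical names, and ranking by a single retriever score is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    text: str
    score: float  # hypothetical retriever relevance score


def split_hybrid_context(passages, k):
    """Keep the k highest-scoring passages as text; route the rest to compression.

    Assumption: passages are ranked by one relevance score. The compressor
    itself is out of scope here; this only decides which passages go where.
    """
    if k < 0:
        raise ValueError("k must be non-negative")
    ranked = sorted(passages, key=lambda p: p.score, reverse=True)
    return ranked[:k], ranked[k:]


passages = [Passage("a", 0.2), Passage("b", 0.9), Passage("c", 0.5)]
as_text, to_compress = split_hybrid_context(passages, k=2)
```

Here `as_text` would be passed to the LLM verbatim, while each passage in `to_compress` would be replaced by its compression vector.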

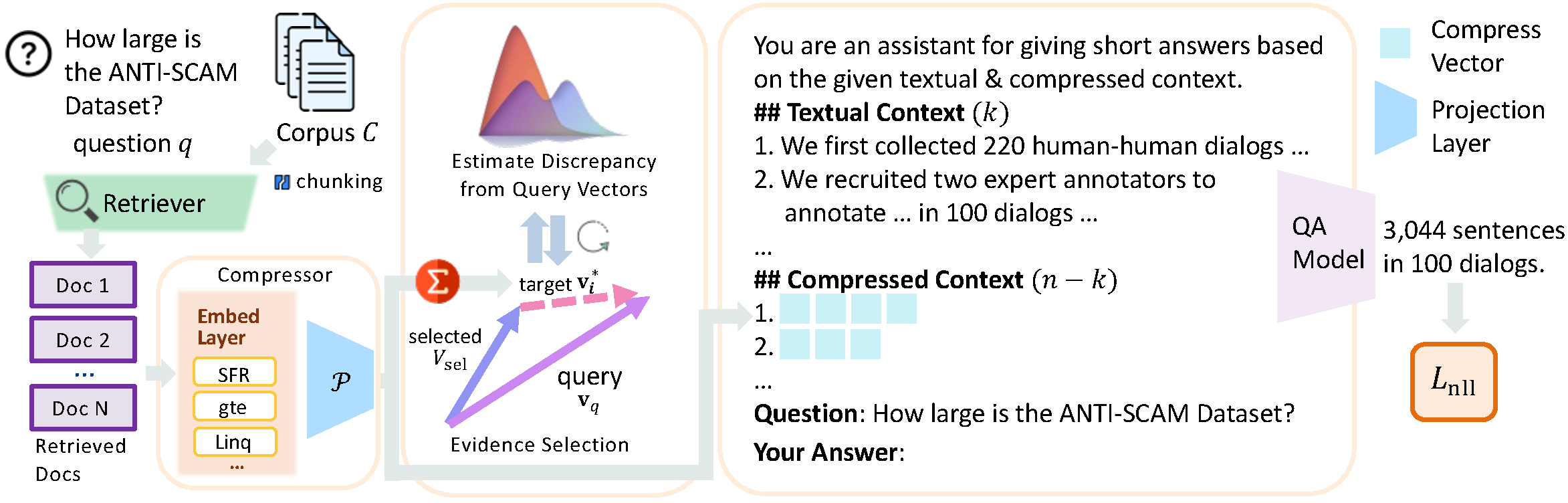

Compression-vector-based selection dynamically reduces redundancy and prioritizes query-relevant evidence using embedding novelty and CSI scoring.
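To make the idea of novelty-aware selection concrete, here is a small greedy sketch over embedding vectors. It is a generic relevance-minus-redundancy heuristic (in the spirit of maximal marginal relevance), not the CSI scoring mentioned above, which this page does not define. The function names and the `redundancy_weight` parameter are assumptions for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def select_evidence(query_vec, evidence_vecs, budget, redundancy_weight=0.5):
    """Greedily pick up to `budget` evidence indices.

    Each step picks the candidate with the best (relevance to the query)
    minus (weighted similarity to anything already selected).
    """
    selected = []
    remaining = list(range(len(evidence_vecs)))

    def gain(i):
        relevance = cosine(query_vec, evidence_vecs[i])
        redundancy = max(
            (cosine(evidence_vecs[i], evidence_vecs[j]) for j in selected),
            default=0.0,
        )
        return relevance - redundancy_weight * redundancy

    while remaining and len(selected) < budget:
        best = max(remaining, key=gain)
        selected.append(best)
        remaining.remove(best)
    return selected


# Vectors 0 and 1 are near-duplicates; vector 2 covers a different direction.
picked = select_evidence([1.0, 1.0], [[1.0, 0.0], [1.0, 0.05], [0.0, 1.0]], budget=2)
```

With the toy vectors above, the second pick skips the near-duplicate of the first pick in favor of the vector that adds new information.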

Works across 5 open-source LLMs spanning 3 families (Mistral, Llama, Gemma) and generalizes to multiple retrievers — no architectural changes required.

SARA outperforms competitive RAG baselines and dedicated context-compression methods across both lexical and LLM-based metrics.

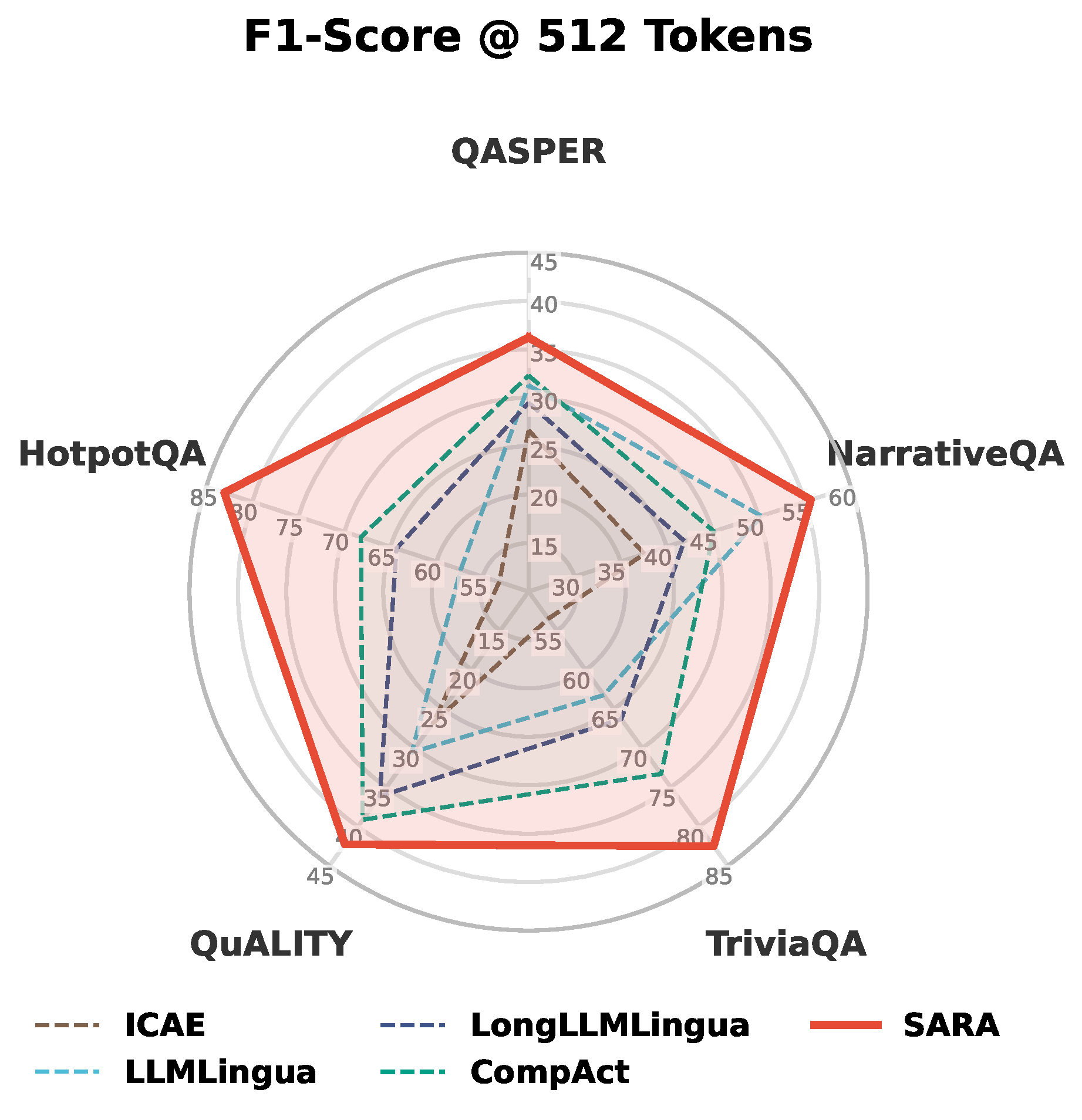

Context efficiency at a 512-token budget. SARA outperforms compression-based methods on every dataset. The hybrid representation, which pairs natural-language passages with compression vectors, yields large gains on knowledge-intensive tasks.

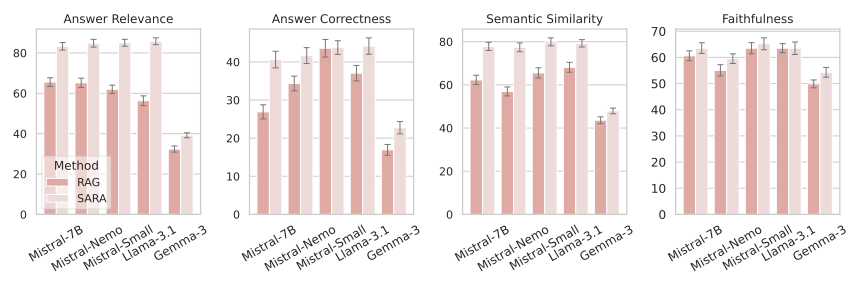

Generalization across LLMs. SARA improves performance for every backbone tested — Mistral-7B, MistralNemo-12B, MistralSmall-24B, Llama-3.1-8B, Gemma3-4B. 7B models with SARA match the performance of 24B baselines.

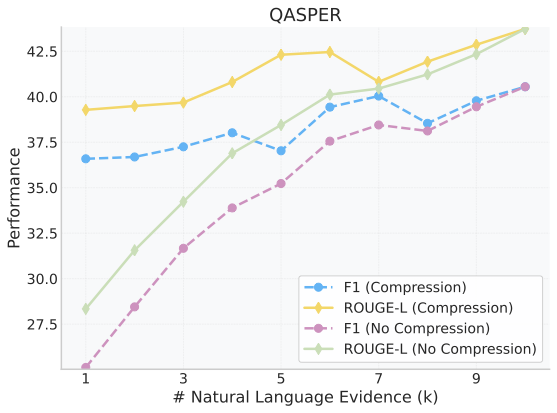

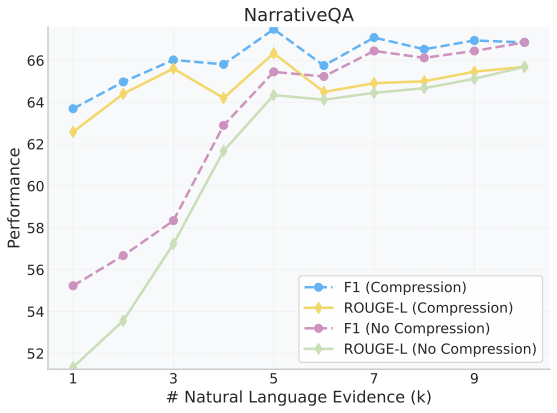

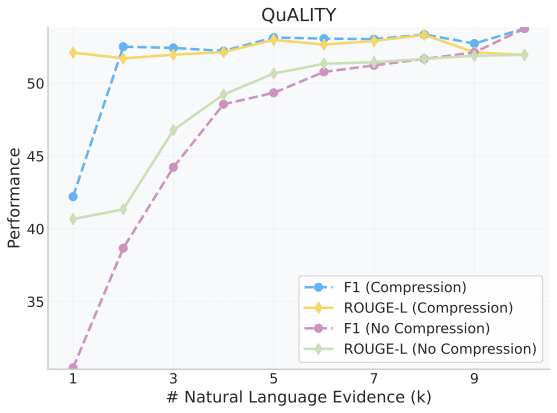

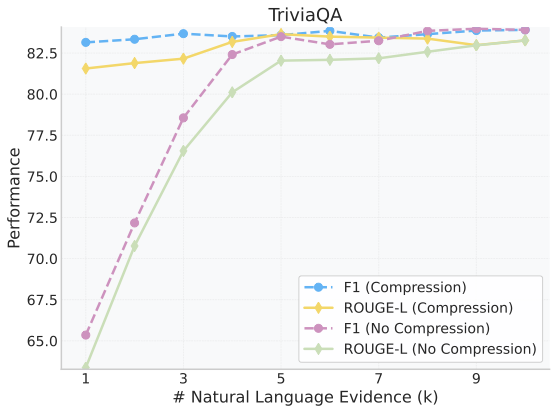

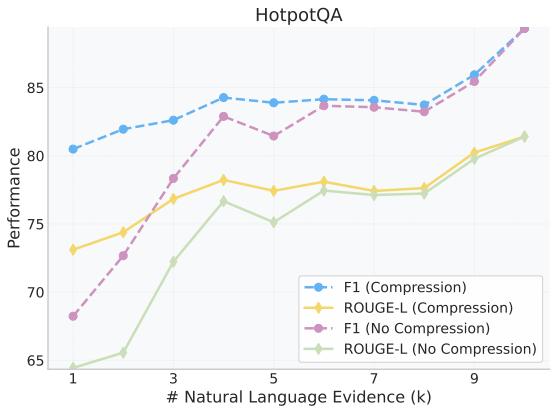

Holding the total context size fixed at N=10 and sweeping the number of natural-language passages k, performance peaks around k=7–8. Even at k=1, compression vectors close most of the gap to the full-text setup, which suggests that the vectors add real signal at minimal token cost.
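The token cost behind this trade-off is simple arithmetic, sketched below. It assumes each compressed passage occupies exactly one token (the single-token vectors described earlier) and uses a hypothetical average passage length of 100 tokens, which is an illustrative figure and not a number from the paper.

```python
def context_cost(n_total, k_text, tokens_per_passage):
    """Approximate input tokens when k_text of n_total passages stay as text.

    Assumptions: every text passage costs `tokens_per_passage` tokens and
    every compressed passage costs a single token.
    """
    if not 0 <= k_text <= n_total:
        raise ValueError("k_text must be between 0 and n_total")
    return k_text * tokens_per_passage + (n_total - k_text)


# Sweep k for N=10 with an illustrative 100-token passage length.
costs = {k: context_cost(10, k, 100) for k in range(11)}
```

Under these assumptions, moving a passage from text to a compression vector saves all but one of its tokens, so the compressed portion of the context is nearly free relative to the text portion.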

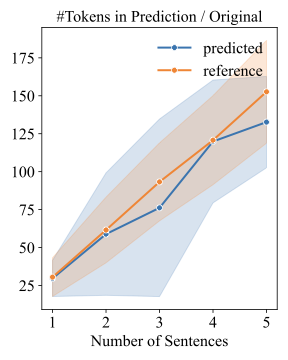

Decoded text from a single compression token recovers a span comparable to a 3-sentence reference, capturing entities and numerical values with high fidelity.

Compression effectiveness. Probability density of recovered token counts for decoded evidence and the reference 3-sentence budget — the decoder tracks the reference distribution closely.

@inproceedings{jin2026sara,
  title = {SARA: Selective and Adaptive Retrieval-augmented Generation with Context Compression},
  author = {Jin, Yiqiao and Sharma, Kartik and Rakesh, Vineeth and Dou, Yingtong and Pan, Menghai and Das, Mahashweta and Kumar, Srijan},
  booktitle = {Proceedings of the Annual Meeting of the Association for Computational Linguistics (ACL)},
  year = {2026}
}